I have been a HashiCorp fanboy for a couple of years now. I am impressed and happy with pretty much everything they have done, from Vagrant to Consul and more. In short, they make the DevOps world a better place. That being said, this article is about the aptly named Terraform. Here is how HashiCorp describes Terraform in their own words…

“Terraform enables you to safely and predictably create, change, and improve production infrastructure. It is an open source tool that codifies APIs into declarative configuration files that can be shared amongst team members, treated as code, edited, reviewed, and versioned.”

Interestingly enough, the description never says so outright, but by omitting any mention of specific providers it implies that Terraform is multi-cloud (or cloud agnostic). Terraform works with AWS, GCP, Azure and OpenStack. In this article we will cover how to use Terraform with AWS.

Step 1: download Terraform. I am not going to cover that part 😉

https://www.terraform.io/downloads.html

Step 2, Configuration…

Configuration

HashiCorp uses its own configuration language (HCL) for Terraform. It is fully JSON compatible, which is nice. The details are covered here https://github.com/hashicorp/hcl.

After downloading and installing Terraform, it’s time to start writing the configs.

AWS IAM Keys

AWS keys are required for Terraform to do anything in AWS. You can read about how to generate an access key / secret key for a user here: http://docs.aws.amazon.com/IAM/latest/UserGuide/id_credentials_access-keys.html#Using_CreateAccessKey

Terraform Configuration Files Overview

When you run terraform commands, you should be in a directory containing Terraform configuration files. Terraform loads every file in that directory ending in a ‘.tf’ extension, in alphabetical order.

Before we jump into the configuration files for our jump box, it may be helpful to review a quick primer on the syntax here https://www.terraform.io/docs/configuration/syntax.html

and on some more advanced features, such as lookup, here https://www.terraform.io/docs/configuration/interpolation.html

Most users of Terraform choose to make their configs modular, resulting in multiple .tf files with names like main.tf, variables.tf and data.tf. This is not required: you can choose to put everything in one big Terraform file, but I caution you that modularity/compartmentalization is a better approach than one monolithic file. Let’s take a look at our main.tf

Main.tf

Typically, if you only see one Terraform config file, it is called main.tf. Most commonly there will be at least one other file called variables.tf, used specifically for providing values for variables used in other .tf files such as main.tf. Let’s take a look at our main.tf file section by section.

Provider

The provider keyword identifies the platform (cloud) you will be talking to, whether it is AWS or another cloud. In our case it is AWS, and we define three configuration items: an access key, a secret key, and a region. For all three we insert variables, which are later resolved to real values from variables.tf.

    provider "aws" {
      access_key = "${var.aws_access_key_id}"
      secret_key = "${var.aws_secret_access_key}"
      region     = "${var.aws_region}"
    }
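If you would rather not pass keys through Terraform variables at all, the AWS provider can also read credentials from the standard AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY environment variables. A minimal sketch of that variant, assuming both are already exported in your shell (this is not the config used in the rest of this article):

```hcl
# Alternative provider block (a sketch, not the article's actual config).
# Assumes AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY are exported in the shell,
# so no key material has to appear in any .tf file.
provider "aws" {
  region = "${var.aws_region}"
}
```

With this variant the two key variables and their prompts are no longer needed.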

Resource aws_instance

This section defines a resource, an “aws_instance” that we are calling “jump_box”. We define all configuration requirements for that instance, substituting variable names where necessary and in some cases just hard-coding the value. Notice we are attaching two security groups to the instance to allow ICMP & SSH. We are also tagging our instance, which is critical in an AWS environment so your administrators/teammates have some idea about the machine that is spun up and what it is used for.

    resource "aws_instance" "jump_box" {
      ami           = "${lookup(var.ami_base, "centos-7")}"
      instance_type = "t2.medium"
      key_name      = "${var.key_name}"

      vpc_security_group_ids = [
        "${lookup(var.security-groups, "allow-icmp-from-home")}",
        "${lookup(var.security-groups, "allow-ssh-from-home")}"
      ]

      subnet_id = "${element(var.subnets_private, 0)}"

      root_block_device {
        volume_size = 8
        volume_type = "standard"
      }

      user_data = <<-EOF
        #!/bin/bash
        yum -y update
        EOF

      tags = {
        Name            = "${var.instance_name_prefix}-jump"
        ApplicationName = "jump-box"
        ApplicationRole = "ops"
        Cluster         = "${var.tags["Cluster"]}"
        Environment     = "${var.tags["Environment"]}"
        Project         = "${var.tags["Project"]}"
        BusinessUnit    = "${var.tags["BusinessUnit"]}"
        OwnerEmail      = "${var.tags["OwnerEmail"]}"
        SupportEmail    = "${var.tags["SupportEmail"]}"
      }
    }

Btw, this resource type is provided by the AWS provider and is documented here https://www.terraform.io/docs/providers/aws/r/instance.html

If Terraform complains that something is missing when you first run it, note that terraform get downloads any modules your config references (which can be done once you write some config files and type terraform get 🙂 ), while on Terraform 0.10 and later the AWS provider plugin itself is installed by terraform init.

Also, note the usage of ‘user_data’ here to update the machine’s packages at boot time. This is an AWS feature that is exposed through the AWS provider in Terraform.
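The jump box above is pinned to the first private subnet with element(var.subnets_private, 0). As a sketch of where element becomes more useful, here is a hypothetical resource (the name "worker" and the count of 2 are my assumptions, not part of this deployment) that spreads copies across the subnets using count.index:

```hcl
# Hypothetical variant: two instances spread across the private subnets.
# "worker" and the count of 2 are illustrative, not part of the article's config.
resource "aws_instance" "worker" {
  count         = 2
  ami           = "${lookup(var.ami_base, "centos-7")}"
  instance_type = "t2.medium"
  key_name      = "${var.key_name}"

  # element() wraps around, so an index past the end of the list starts again at 0.
  subnet_id = "${element(var.subnets_private, count.index)}"
}
```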

Resource aws_security_group

Next we define a new security group (vs attaching an existing one, as in the section above). We are creating this new security group for other VMs in the environment to attach later, so that it can be used to allow SSH from the jump host to those VMs.

Also notice that under cidr_blocks we define a single IP address, a /32 for our jump host. More important is how we determine that jump host’s IP address: we use .private_ip to access an attribute of the “jump_box” aws_instance we are creating/just created in AWS. Because of this reference, Terraform knows to create the instance before the security group. That is pretty cool.

    resource "aws_security_group" "jump_box_sg" {
      name        = "${var.instance_name_prefix}-allow-ssh-from-jumphost"
      description = "Allow SSH from the jump host"
      vpc_id      = "${var.vpc_id}"

      ingress {
        from_port   = 22
        to_port     = 22
        protocol    = "tcp"
        cidr_blocks = ["${aws_instance.jump_box.private_ip}/32"]
      }

      tags = "${var.tags_infra_default}"
    }
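To make the "attach later" part concrete, here is a minimal sketch of another instance that picks up this security group. The resource name "app_server" is hypothetical, and the other settings are simply borrowed from the jump box for illustration:

```hcl
# Hypothetical application server that accepts SSH only from the jump host.
# The resource name "app_server" is an assumption for illustration.
resource "aws_instance" "app_server" {
  ami           = "${lookup(var.ami_base, "centos-7")}"
  instance_type = "t2.medium"
  key_name      = "${var.key_name}"
  subnet_id     = "${element(var.subnets_private, 0)}"

  # Reference the group defined above by its computed id attribute.
  vpc_security_group_ids = ["${aws_security_group.jump_box_sg.id}"]
}
```

The same attribute-reference trick used for .private_ip applies here: the group's id is only known after AWS creates it, and Terraform orders the work accordingly.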

Resource aws_route53_record

The last entry in our main.tf creates a DNS entry for our jump host in Route53. Again, notice we specify jump. as a prefix for the record name, while the remainder of the FQDN is supplied by the lookup function. lookup retrieves a value from a map by key. In this case the map is defined in our variables.tf, which we will review next.

    resource "aws_route53_record" "jump_box_dns" {
      zone_id = "${lookup(var.route53_zone, "id")}"
      type    = "A"
      ttl     = "300"
      name    = "jump.${lookup(var.route53_zone, "name")}"
      records = ["${aws_instance.jump_box.private_ip}"]
    }
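One detail of lookup worth knowing: it accepts an optional third argument that is returned when the key is not in the map. A small sketch, assuming a "ttl" key that the route53_zone map in this article does not actually define, and a hypothetical output name:

```hcl
# Sketch: lookup() with a fallback value.
# The "ttl" key is hypothetical; the article's route53_zone map only has
# "id" and "name", so this expression would fall back to "300".
output "jump_box_record_ttl" {
  value = "${lookup(var.route53_zone, "ttl", "300")}"
}
```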

Variables.tf

I will attempt to match the section structure I used above for main.tf when explaining the variables in variables.tf, though the file itself is not laid out in such clear sections.

Provider variables

When Terraform runs, it loads all .tf files and replaces every var.<name> reference with the value declared for that name in a variable block (in our case, in variables.tf). Notice that the first two variables are empty; they have no default value. Why? Terraform supports taking input at runtime: when a variable has no default and no value is supplied some other way, Terraform prompts us for it. Region is pretty straightforward: default is the value used when nothing overrides it, and description serves only as documentation.

    variable "aws_access_key_id" {}

    variable "aws_secret_access_key" {}

    variable "aws_region" {
      description = "AWS region to create resources in"
      default     = "us-east-1"
    }

Here is a demonstration of the behavior described above, when the variables are left empty:

    ➜ jump terraform plan
    var.aws_access_key_id
      Enter a value: aaaa

    var.aws_secret_access_key
      Enter a value: bbbb

    Refreshing Terraform state in-memory prior to plan...
    The refreshed state will be used to calculate this plan, but
    will not be persisted to local or remote state storage.

    ...

At this phase you would enter your AWS key info, and Terraform would ‘plan’ out your deployment, meaning it would run through your configs and print its plan to your screen without actually changing any state in AWS. That is the difference between terraform plan and terraform apply.
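If you would rather not be prompted on every run, one option is a terraform.tfvars file sitting next to your .tf files, which Terraform loads automatically. A minimal sketch, with placeholder values rather than real keys:

```hcl
# terraform.tfvars (a sketch; every value below is a placeholder).
# Terraform loads a file with this exact name automatically from the
# working directory, so the prompts shown above no longer appear.
aws_access_key_id     = "REPLACE_WITH_ACCESS_KEY_ID"
aws_secret_access_key = "REPLACE_WITH_SECRET_ACCESS_KEY"
aws_region            = "us-east-1"
```

Since this file holds secrets, keep it out of version control.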

Resource aws_instance variables

Here we define the values of our AMI, SSH key, instance name prefix, tags, security groups, and subnets. Again this should be pretty straightforward, no magic here, just the use of string variables & maps where necessary.

    variable "ami_base" {
      description = "AWS AMIs for base images"
      default = {
        "centos-7"     = "ami-2af1ca3d"
        "ubuntu-14.04" = "ami-d79487c0"
      }
    }

    variable "key_name" {
      default = "tuxninja-rsa-2048"
    }

    variable "instance_name_prefix" {
      default = "tuxlabs-"
    }

    variable "tags" {
      type = "map"
      default = {
        ApplicationName = "Jump"
        ApplicationRole = "jump box - bastion"
        Cluster         = "Jump"
        Environment     = "Dev"
        Project         = "Jump"
        BusinessUnit    = "TuxLabs"
        OwnerEmail      = "tuxninja@tuxlabs.com"
        SupportEmail    = "tuxninja@tuxlabs.com"
      }
    }

    variable "tags_infra_default" {
      type = "map"
      default = {
        ApplicationName = "Jump"
        ApplicationRole = "jump box - bastion"
        Cluster         = "Jump"
        Environment     = "DEV"
        Project         = "Jump"
        BusinessUnit    = "TuxLabs"
        OwnerEmail      = "tuxninja@tuxlabs.com"
        SupportEmail    = "tuxninja@tuxlabs.com"
      }
    }

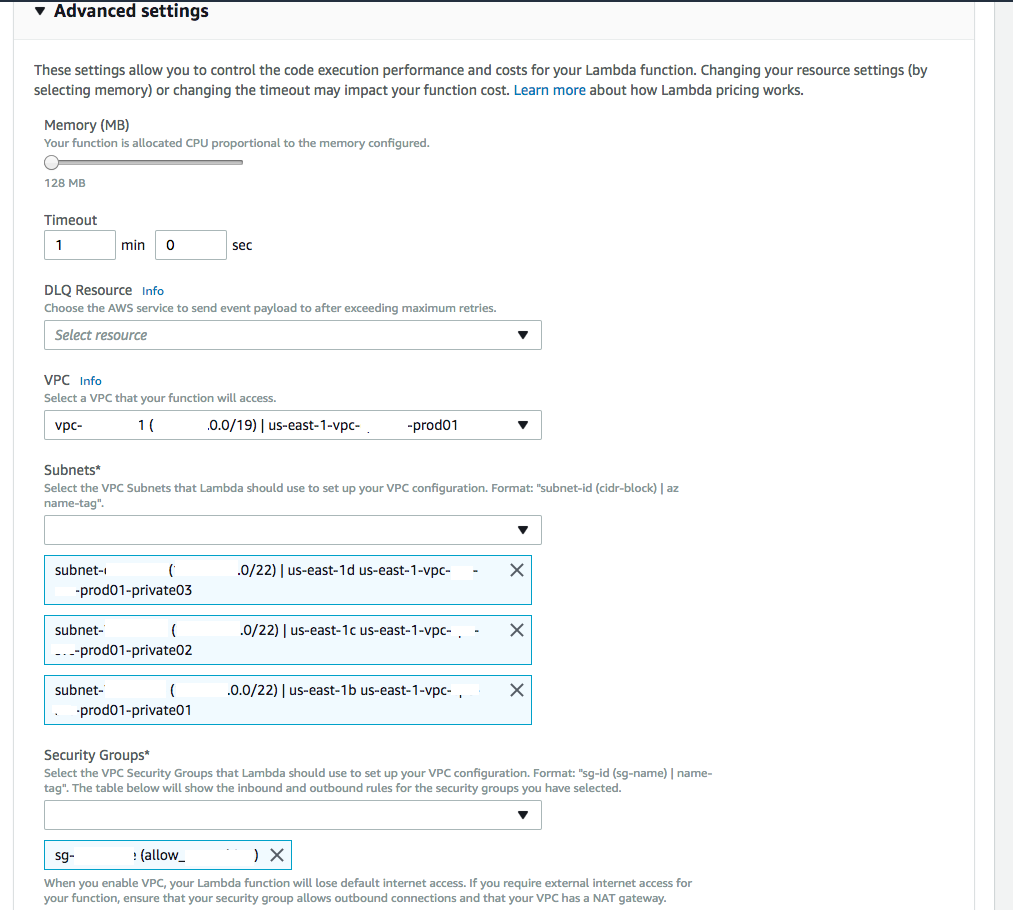

    variable "security-groups" {
      description = "maintained security groups"
      default = {
        "allow-icmp-from-home" = "sg-a1b75ddc"
        "allow-ssh-from-home"  = "sg-aab75dd7"
      }
    }

    variable "vpc_id" {
      description = "VPC us-east-1-vpc-tuxlabs-dev01"
      default     = "vpc-c229daa5"
    }

    variable "subnets_private" {
      description = "Private subnets within us-east-1-vpc-tuxlabs-dev01 vpc"
      default     = ["subnet-78dfb852", "subnet-a67322d0", "subnet-7aa1cd22", "subnet-75005c48"]
    }

    variable "subnets_public" {
      description = "Public subnets within us-east-1-vpc-tuxlabs-dev01 vpc"
      default     = ["subnet-7bdfb851", "subnet-a57322d3", "subnet-47a1cd1f", "subnet-73005c4e"]
    }

It’s important to note the variables above are also used in other sections as needed, such as the aws_security_group section in main.tf …

Resource aws_route53_record variables

Here we define the ID & Name that are used in the ‘lookup’ functionality from our main.tf Route53 section above.

    variable "route53_zone" {
      description = "Route53 zone used for DNS records"
      default = {
        id   = "Z1ME2RCUVBYEW2"
        name = "tuxlabs.com"
      }
    }

It’s important to note that Terraform does not care in which file, or in what order, things are declared. All .tf files in the directory are loaded together, and variables need not appear in any particular order relative to the rest of the configuration. All that is required is that every variable you reference is declared with the variable keyword in some .tf file.

Output.tf

Again, I want to remind folks that you could put all of this Terraform syntax in one file if you wanted to, but I choose to split things up for readability and simplicity. So we have an output.tf file specifically for outputs. It contains a single output block, which prints the details of what our configuration created once the apply succeeds.

    output "jump-box-details" {
      value = "${aws_route53_record.jump_box_dns.fqdn} - ${aws_instance.jump_box.private_ip} - ${aws_instance.jump_box.id} - ${aws_instance.jump_box.availability_zone}"
    }
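A single concatenated string is handy to eyeball, but if scripts need to consume the values, separate outputs can be easier to work with. A sketch with output names of my own choosing (they are not part of the config used in this article):

```hcl
# Sketch: the same details exposed as separate outputs.
# The output names here are illustrative.
output "jump_box_fqdn" {
  value = "${aws_route53_record.jump_box_dns.fqdn}"
}

output "jump_box_ip" {
  value = "${aws_instance.jump_box.private_ip}"
}
```

After an apply, a single value can then be read back with terraform output jump_box_ip.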

OK, so let’s run this and see how it looks. First, a reminder: to test your config you can run terraform plan first. It will tell you the changes it is going to make, for example:

    ➜ jump terraform plan
    var.aws_access_key_id
      Enter a value: blahblah

    var.aws_secret_access_key
      Enter a value: blahblahblahblah

    Refreshing Terraform state in-memory prior to plan...
    The refreshed state will be used to calculate this plan, but
    will not be persisted to local or remote state storage.

    The Terraform execution plan has been generated and is shown below.
    Resources are shown in alphabetical order for quick scanning. Green resources
    will be created (or destroyed and then created if an existing resource
    exists), yellow resources are being changed in-place, and red resources
    will be destroyed. Cyan entries are data sources to be read.

    Note: You didn't specify an "-out" parameter to save this plan, so when
    "apply" is called, Terraform can't guarantee this is what will execute.

    + aws_instance.jump_box
        ami: "ami-2af1ca3d"
        associate_public_ip_address: "<computed>"
        availability_zone: "<computed>"
        ebs_block_device.#: "<computed>"
        ephemeral_block_device.#: "<computed>"
        instance_state: "<computed>"
        instance_type: "t2.medium"
        ...

    Plan: 3 to add, 0 to change, 0 to destroy.

If everything looks good & is green, you are ready to run terraform apply. Here is the tail end of its output:

    aws_security_group.jump_box_sg: Creation complete
    aws_route53_record.jump_box_dns: Still creating... (10s elapsed)
    aws_route53_record.jump_box_dns: Still creating... (20s elapsed)
    aws_route53_record.jump_box_dns: Still creating... (30s elapsed)
    aws_route53_record.jump_box_dns: Still creating... (40s elapsed)
    aws_route53_record.jump_box_dns: Still creating... (50s elapsed)
    aws_route53_record.jump_box_dns: Creation complete

    Apply complete! Resources: 3 added, 0 changed, 0 destroyed.

    The state of your infrastructure has been saved to the path
    below. This state is required to modify and destroy your
    infrastructure, so keep it safe. To inspect the complete state
    use the `terraform show` command.

    State path: terraform.tfstate

    Outputs:

    jump-box-details = jump.tuxlabs.com - 10.10.195.46 - i-037f5b15bce6cc16d - us-east-1b
    ➜ jump

Congratulations, you now have a jump box in AWS using Terraform. Remember to attach the required security group to each machine you want to grant access to, and start locking down your jump box / bastion and VMs.

Outro

Remember, if you take the above config and try to run it, swapping out only the variables, it may error out with something about a required module or plugin. Downloading required modules is as simple as typing ‘terraform get’, and on Terraform 0.10 and later ‘terraform init’ installs the provider plugins. I believe the error message even tells you which to run 🙂

So again, this was a brief intro to Terraform; it does a lot & is extremely powerful. One of the things I did when setting up a Mongo cluster using Terraform was to take advantage of a map to change the node count per region. So if you wanted to deploy a different number of instances in different regions, your config might look something like…

main.tf

count = "${var.region_instance_count[var.region_name]}"

variables.tf

    variable "region_instance_count" {
      type = "map"
      default = {
        us-east-1    = 2
        us-west-2    = 1
        eu-central-1 = 1
        eu-west-1    = 1
      }
    }
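To show where that count line would live, here is a rough sketch of a complete resource. The resource name "mongo_node" and the region_name declaration are my assumptions for illustration; this is not the actual Mongo cluster config:

```hcl
# Sketch only: "mongo_node" and the region_name default are hypothetical.
variable "region_name" {
  default = "us-east-1"
}

resource "aws_instance" "mongo_node" {
  # Number of copies to create, taken from the per-region map above.
  count = "${var.region_instance_count[var.region_name]}"

  # AMI IDs are region-specific, so a real multi-region setup would need
  # a per-region AMI map; the article's ami_base map is reused here as-is.
  ami           = "${lookup(var.ami_base, "centos-7")}"
  instance_type = "t2.medium"

  tags = {
    # count.index numbers the copies 0, 1, ... within the region.
    Name = "mongo-${var.region_name}-${count.index}"
  }
}
```

With region_name set to us-east-1 this would plan two instances, and one for each of the other regions in the map.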

Terraform also supports split if you want to pack multiple values into a single string variable and break them apart into a list.

A couple more things before I forget: terraform apply doesn’t just set up new infrastructure, it can also modify existing infrastructure, which is a very powerful feature. So if you have something deployed and want to make a change, terraform apply is your friend.

And finally, when you are done with the infrastructure you spun up and it’s time to bring it down… ‘terraform destroy’
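As a quick sketch of split in action, assuming a hypothetical comma-separated variable named "subnet_csv" (not part of the config in this article):

```hcl
# Sketch: a comma-separated string turned into a list with split().
# The variable name "subnet_csv" and the output name are hypothetical.
variable "subnet_csv" {
  default = "subnet-78dfb852,subnet-a67322d0"
}

output "first_subnet" {
  # split(delimiter, string) returns a list; element() picks one entry.
  value = "${element(split(",", var.subnet_csv), 0)}"
}
```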

    ➜ jump terraform destroy
    Do you really want to destroy?
      Terraform will delete all your managed infrastructure.
      There is no undo. Only 'yes' will be accepted to confirm.

      Enter a value: yes

    var.aws_access_key_id
      Enter a value: blahblah

    var.aws_secret_access_key
      Enter a value: blahblahblahblah

    ...

    Destroy complete! Resources: 3 destroyed.
    ➜ jump

I hope this article helps.

Happy Terraforming 😉