Prerequisites

This article is a continuation of my previous article on how to do a single-node, all-in-one (AIO) OpenStack Icehouse install using Red Hat’s packstack. A working OpenStack AIO installation built with packstack is required for this article. If you do not already have one, please work through the previous article before continuing with the steps here.

Preparing Our Compute Node

As in the previous article, we first need to set up our system and network to work with OpenStack. I started with a minimal CentOS 6.5 install and then configured the following:

- resolv.conf
- sudoers
- My network interfaces, eth0 (192.x) and eth1 (10.x)
- Hostname: ruby.tuxlabs.com (I also set up DNS for this)
- EXT IP: 192.168.1.11
- INT IP: 10.0.0.2
- A local user, added to the wheel group for sudo
- These handy dependencies:
  - yum install -y openssh-clients
  - yum install -y yum-utils
  - yum install -y wget
  - yum install -y bind-utils

And I disabled SELinux. Don’t forget to reboot afterwards.

To see how I set up the above prerequisites, see the “Setting Up Our Initial System” section of the previous controller install article: http://tuxlabs.com/?p=82
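If you prefer to script the package and SELinux steps from the list above, here is a minimal sketch, assuming CentOS 6.x and a root shell. The function names install_helpers and disable_selinux_config are hypothetical; the config path defaults to /etc/selinux/config but can be pointed at a copy to try it out first.

```shell
# Sketch of the compute-node prep steps above (assumes CentOS 6.x, run as root).
# install_helpers and disable_selinux_config are hypothetical names.

# Install the handy dependencies from the list in a single yum call.
install_helpers() {
    yum install -y openssh-clients yum-utils wget bind-utils
}

# Persistently disable SELinux by rewriting the SELINUX= line.
# The path defaults to /etc/selinux/config; pass a copy to try it safely.
# The change takes effect after a reboot.
disable_selinux_config() {
    local conf="${1:-/etc/selinux/config}"
    sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$conf"
}
```

Run install_helpers, then disable_selinux_config, then reboot. If you need SELinux out of the way before the reboot, setenforce 0 switches it to permissive mode until the next boot.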

Adding Our Compute Node Using PackStack

To start, we follow the steps at https://openstack.redhat.com/Adding_a_compute_node

I am including the link for reference, but you don’t need to click it, as I list the steps below.

On your controller node (diamond.tuxlabs.com):

First, locate your answers file from your previous packstack all-in-one install.

```
[root@diamond tuxninja]# ls *answers*
packstack-answers-20140802-125113.txt
[root@diamond tuxninja]#
```
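If you have run packstack more than once, there may be several answers files. Here is a small helper that picks the most recently modified one, assuming the files keep the default packstack-answers-*.txt naming; latest_answers_file is my own hypothetical name and not part of packstack.

```shell
# Print the most recently modified packstack answers file in a directory
# (default: current directory). Hypothetical helper, not part of packstack.
latest_answers_file() {
    local dir="${1:-.}"
    # ls -t sorts newest first; the "no match" error is discarded.
    ls -t "$dir"/packstack-answers-*.txt 2>/dev/null | head -n 1
}
```

For example, latest_answers_file . run in the directory above would print ./packstack-answers-20140802-125113.txt.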

Edit the answers file.

Change lo to eth1 (assuming that is your private 10.x interface) for both CONFIG_NOVA_COMPUTE_PRIVIF and CONFIG_NOVA_NETWORK_PRIVIF:

```
[root@diamond tuxninja]# egrep 'CONFIG_NOVA_COMPUTE_PRIVIF|CONFIG_NOVA_NETWORK_PRIVIF' packstack-answers-20140802-125113.txt
CONFIG_NOVA_COMPUTE_PRIVIF=eth1
CONFIG_NOVA_NETWORK_PRIVIF=eth1
[root@diamond tuxninja]#
```
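The same edit can be made non-interactively with sed. This is a sketch, assuming GNU sed (for in-place -i) and that both keys already exist in the file, as they do in a packstack-generated answers file; set_private_iface is a hypothetical name. A .bak copy of the original file is kept alongside it.

```shell
# Set both private-interface keys in a packstack answers file (GNU sed).
# set_private_iface is hypothetical; a .bak copy of the file is kept.
set_private_iface() {
    local file="$1" iface="${2:-eth1}"
    sed -i.bak \
        -e "s/^CONFIG_NOVA_COMPUTE_PRIVIF=.*/CONFIG_NOVA_COMPUTE_PRIVIF=${iface}/" \
        -e "s/^CONFIG_NOVA_NETWORK_PRIVIF=.*/CONFIG_NOVA_NETWORK_PRIVIF=${iface}/" \
        "$file"
}
```

Usage: set_private_iface packstack-answers-20140802-125113.txt eth1, then rerun the egrep to confirm.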

Change CONFIG_COMPUTE_HOSTS to the IP address of the compute node you want to add, in our case 192.168.1.11. Also verify that CONFIG_NETWORK_HOSTS is set to your controller’s IP, since we are not running a separate network node:

```
[root@diamond tuxninja]# egrep 'CONFIG_COMPUTE_HOSTS|CONFIG_NETWORK_HOSTS' packstack-answers-20140802-125113.txt
CONFIG_COMPUTE_HOSTS=192.168.1.11
CONFIG_NETWORK_HOSTS=192.168.1.10
[root@diamond tuxninja]#
```
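Likewise for the host settings, here is a sketch that sets CONFIG_COMPUTE_HOSTS and returns non-zero if CONFIG_NETWORK_HOSTS does not match the controller IP you pass in. It assumes GNU sed, and set_compute_host is a hypothetical name.

```shell
# Point CONFIG_COMPUTE_HOSTS at the new compute node, then confirm that
# CONFIG_NETWORK_HOSTS still names the controller (GNU sed assumed).
# set_compute_host is hypothetical; a .bak copy of the file is kept.
set_compute_host() {
    local file="$1" compute_ip="$2" controller_ip="$3"
    sed -i.bak "s/^CONFIG_COMPUTE_HOSTS=.*/CONFIG_COMPUTE_HOSTS=${compute_ip}/" "$file"
    # -F: match the IP literally (dots are not wildcards); -x: whole line.
    if ! grep -qxF "CONFIG_NETWORK_HOSTS=${controller_ip}" "$file"; then
        echo "warning: CONFIG_NETWORK_HOSTS is not ${controller_ip}" >&2
        return 1
    fi
}
```

Usage: set_compute_host packstack-answers-20140802-125113.txt 192.168.1.11 192.168.1.10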

That’s it. Now run packstack again on the controller, using the edited answers file:

```
[tuxninja@diamond yum.repos.d]$ sudo packstack --answer-file=packstack-answers-20140802-125113.txt
```

When that completes, SSH into (or switch terminals over to) the compute node you just added.

On the compute node (ruby.tuxlabs.com)

Verify that the relevant OpenStack compute services are running:

```
[root@ruby ~]# openstack-status
== Nova services ==
openstack-nova-api: dead (disabled on boot)
openstack-nova-compute: active
openstack-nova-network: dead (disabled on boot)
openstack-nova-scheduler: dead (disabled on boot)
== neutron services ==
neutron-server: inactive (disabled on boot)
neutron-dhcp-agent: inactive (disabled on boot)
neutron-l3-agent: inactive (disabled on boot)
neutron-metadata-agent: inactive (disabled on boot)
neutron-lbaas-agent: inactive (disabled on boot)
neutron-openvswitch-agent: active
== Ceilometer services ==
openstack-ceilometer-api: dead (disabled on boot)
openstack-ceilometer-central: dead (disabled on boot)
openstack-ceilometer-compute: active
openstack-ceilometer-collector: dead (disabled on boot)
== Support services ==
libvirtd: active
openvswitch: active
messagebus: active
Warning novarc not sourced
[root@ruby ~]#
```
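Rather than eyeballing the openstack-status output, you can check the services a compute node needs in a script. This is a minimal sketch, assuming the "name: state" line format shown above; check_services_active is a hypothetical helper. It prints each listed service that is missing or not active and returns non-zero if there are any.

```shell
# Read `openstack-status` output on stdin and check that every service named
# as an argument reports "active". Prints each service that is missing or in
# another state, and returns 1 if there were any. Hypothetical helper.
check_services_active() {
    local status_text svc state rc=0
    status_text=$(cat)
    for svc in "$@"; do
        # Lines look like "name: state (...)"; match the name exactly.
        state=$(printf '%s\n' "$status_text" | awk -v s="${svc}:" '$1 == s { print $2; exit }')
        if [ "$state" != "active" ]; then
            echo "${svc}: ${state:-missing}"
            rc=1
        fi
    done
    return "$rc"
}
```

Usage: openstack-status | check_services_active openstack-nova-compute neutron-openvswitch-agent openstack-ceilometer-compute libvirtd openvswitch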

Back on the controller (diamond.tuxlabs.com)

We should now be able to verify that ruby.tuxlabs.com has been added as a compute node hypervisor:

```
[tuxninja@diamond ~]$ sudo -s
[root@diamond tuxninja]# source keystonerc_admin
[root@diamond tuxninja(keystone_admin)]# nova hypervisor-list
+----+---------------------+
| ID | Hypervisor hostname |
+----+---------------------+
| 1  | diamond.tuxlabs.com |
| 2  | ruby.tuxlabs.com    |
+----+---------------------+
[root@diamond tuxninja(keystone_admin)]# nova-manage service list
Binary           Host                 Zone      Status   State Updated_At
nova-consoleauth diamond.tuxlabs.com  internal  enabled  :-)   2014-10-12 20:48:34
nova-conductor   diamond.tuxlabs.com  internal  enabled  :-)   2014-10-12 20:48:35
nova-scheduler   diamond.tuxlabs.com  internal  enabled  :-)   2014-10-12 20:48:27
nova-compute     diamond.tuxlabs.com  nova      enabled  :-)   2014-10-12 20:48:32
nova-cert        diamond.tuxlabs.com  internal  enabled  :-)   2014-10-12 20:48:31
nova-compute     ruby.tuxlabs.com     nova      enabled  :-)   2014-10-12 20:48:35
[root@diamond tuxninja(keystone_admin)]#
```
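To automate the nova-manage check, here is a sketch that succeeds only if nova-compute on a given host is enabled and shows the :-) state. It assumes the column order shown above (Binary, Host, Zone, Status, State), and compute_service_up is a hypothetical name.

```shell
# Read `nova-manage service list` output on stdin; succeed only if the
# nova-compute service on the given host is enabled and reporting ":-)".
# Assumes columns Binary, Host, Zone, Status, State as shown above.
compute_service_up() {
    awk -v h="$1" '
        $1 == "nova-compute" && $2 == h && $4 == "enabled" && $5 == ":-)" { found = 1 }
        END { exit !found }'
}
```

Usage: nova-manage service list | compute_service_up ruby.tuxlabs.com && echo "ruby.tuxlabs.com is up"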

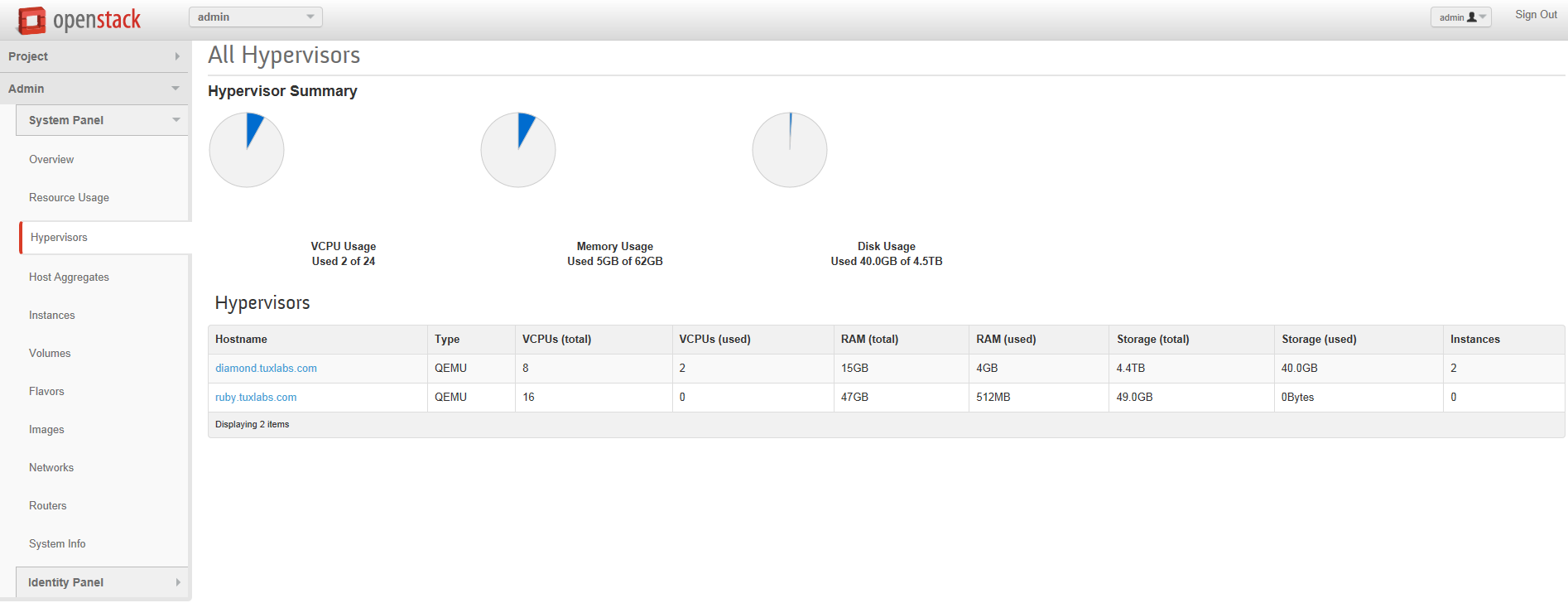

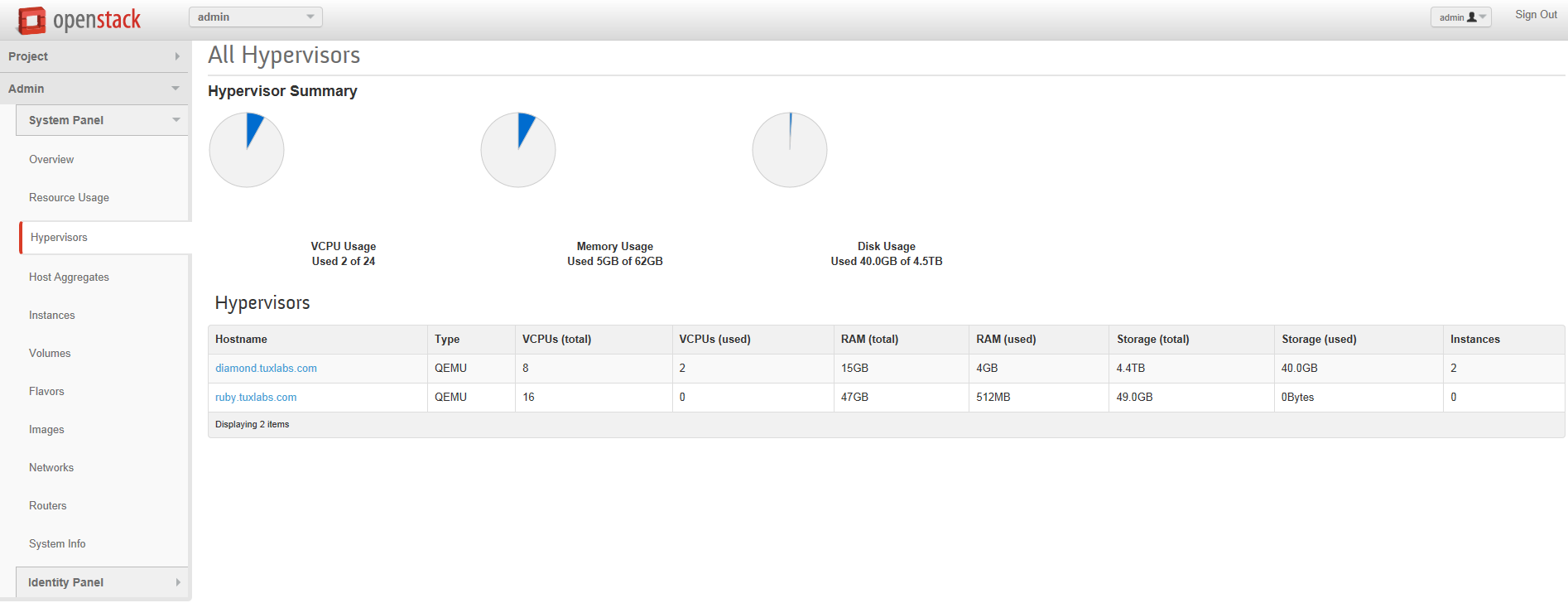

Additionally, you can verify it in the Openstack Dashboard

Next, we are going to boot an instance on the new ruby.tuxlabs.com hypervisor. To do this we need a few pieces of information. First, let’s get our list of OS images:

```
[root@diamond tuxninja(keystone_admin)]# glance image-list
+--------------------------------------+---------------------+-------------+------------------+-----------+--------+
| ID                                   | Name                | Disk Format | Container Format | Size      | Status |
+--------------------------------------+---------------------+-------------+------------------+-----------+--------+
| 0b3f2474-73cc-4df2-ad0e-fdb7a7f7c8a1 | cirros              | qcow2       | bare             | 13147648  | active |
| 737a0060-6e80-415c-b66b-a20893d9888b | Fedora 6.4          | qcow2       | bare             | 210829312 | active |
| 952ac512-19da-47a7-81a4-cfede18c7f45 | ubuntu-server-12.04 | qcow2       | bare             | 260964864 | active |
+--------------------------------------+---------------------+-------------+------------------+-----------+--------+
[root@diamond tuxninja(keystone_admin)]#
```
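nova boot accepts the image name directly, as we do below, but if you want the ID in a script you can pull it from the table. This is a sketch, assuming the pipe-delimited layout shown above with ID in the first column and Name in the second; image_id_by_name is hypothetical. Names containing spaces, such as Fedora 6.4, work because the split is on the | character.

```shell
# Print the ID of the image whose Name column matches exactly, reading
# `glance image-list` table output on stdin. Splitting on "|" keeps names
# with spaces intact. image_id_by_name is a hypothetical helper.
image_id_by_name() {
    awk -F'|' -v n="$1" '
        NF > 3 {
            id = $2; name = $3
            gsub(/^ +| +$/, "", id); gsub(/^ +| +$/, "", name)   # trim padding
            if (name == n) { print id; exit }
        }'
}
```

Usage: IMAGE_ID=$(glance image-list | image_id_by_name ubuntu-server-12.04)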

Great. Now we need the ID of our private network:

```
[root@diamond tuxninja(keystone_admin)]# neutron net-show private
+---------------------------+--------------------------------------+
| Field                     | Value                                |
+---------------------------+--------------------------------------+
| admin_state_up            | True                                 |
| id                        | d1a89c10-0ae2-43f0-8cf2-f02c20e19618 |
| name                      | private                              |
| provider:network_type     | vxlan                                |
| provider:physical_network |                                      |
| provider:segmentation_id  | 10                                   |
| router:external           | False                                |
| shared                    | False                                |
| status                    | ACTIVE                               |
| subnets                   | b8760f9b-3c0a-47c7-a5af-9cb533242f5b |
| tenant_id                 | 7bdf35c08112447b8d2d78cdbbbcfa09     |
+---------------------------+--------------------------------------+
[root@diamond tuxninja(keystone_admin)]#
```
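To avoid copying the network ID by hand, a similar sketch reads one field from the net-show table, assuming the Field | Value layout shown above. net_show_field is a hypothetical name.

```shell
# Print the value of one field (default "id") from `neutron net-show`
# table output on stdin. net_show_field is a hypothetical helper.
net_show_field() {
    awk -F'|' -v f="${1:-id}" '
        NF > 2 {
            key = $2; val = $3
            gsub(/^ +| +$/, "", key); gsub(/^ +| +$/, "", val)   # trim padding
            if (key == f) { print val; exit }
        }'
}
```

Usage: NET_ID=$(neutron net-show private | net_show_field id)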

OK, now we are ready to run the nova boot command:

```
[root@diamond tuxninja(keystone_admin)]# nova boot --flavor m1.small --image 'ubuntu-server-12.04' --key-name cloud --nic net-id=d1a89c10-0ae2-43f0-8cf2-f02c20e19618 --hint force_hosts=ruby.tuxlabs.com test
+--------------------------------------+------------------------------------------------------------+
| Property                             | Value                                                      |
+--------------------------------------+------------------------------------------------------------+
| OS-DCF:diskConfig                    | MANUAL                                                     |
| OS-EXT-AZ:availability_zone          | nova                                                       |
| OS-EXT-SRV-ATTR:host                 | -                                                          |
| OS-EXT-SRV-ATTR:hypervisor_hostname  | -                                                          |
| OS-EXT-SRV-ATTR:instance_name        | instance-00000019                                          |
| OS-EXT-STS:power_state               | 0                                                          |
| OS-EXT-STS:task_state                | scheduling                                                 |
| OS-EXT-STS:vm_state                  | building                                                   |
| OS-SRV-USG:launched_at               | -                                                          |
| OS-SRV-USG:terminated_at             | -                                                          |
| accessIPv4                           |                                                            |
| accessIPv6                           |                                                            |
| adminPass                            | XHUumC5YbE3J                                               |
| config_drive                         |                                                            |
| created                              | 2014-10-12T20:59:47Z                                       |
| flavor                               | m1.small (2)                                               |
| hostId                               |                                                            |
| id                                   | f7b9e8bb-df45-4b94-a896-5600f47c269b                       |
| image                                | ubuntu-server-12.04 (952ac512-19da-47a7-81a4-cfede18c7f45) |
| key_name                             | cloud                                                      |
| metadata                             | {}                                                         |
| name                                 | test                                                       |
| os-extended-volumes:volumes_attached | []                                                         |
| progress                             | 0                                                          |
| security_groups                      | default                                                    |
| status                               | BUILD                                                      |
| tenant_id                            | 7bdf35c08112447b8d2d78cdbbbcfa09                           |
| updated                              | 2014-10-12T20:59:47Z                                       |
| user_id                              | 6bb8fcf3ce9446838e50a6b98fbb5afe                           |
+--------------------------------------+------------------------------------------------------------+
[root@diamond tuxninja(keystone_admin)]#
```

Fantastic. That command should look familiar from our previous tutorial: it is the standard command for launching new VM instances from the command line, with one exception. The ‘--hint force_hosts=ruby.tuxlabs.com’ option tells the scheduler to use ruby.tuxlabs.com as the instance’s hypervisor.
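If you launch instances on specific hypervisors often, the command can be wrapped in a small function. This is a sketch only: boot_on_host and the DRY_RUN, FLAVOR, IMAGE and KEY variables are hypothetical, and the defaults simply mirror the command above. With DRY_RUN=1 it prints the command instead of running it. As an alternative, the OpenStack admin documentation describes targeting a host with --availability-zone nova:ruby.tuxlabs.com, which requires admin credentials.

```shell
# Launch an instance on a chosen hypervisor using the same options as above.
# boot_on_host and the DRY_RUN/FLAVOR/IMAGE/KEY variables are hypothetical;
# defaults mirror the command in this article.
boot_on_host() {
    local name="$1" host="$2" net_id="$3"
    set -- nova boot --flavor "${FLAVOR:-m1.small}" --image "${IMAGE:-ubuntu-server-12.04}" \
        --key-name "${KEY:-cloud}" --nic "net-id=${net_id}" \
        --hint "force_hosts=${host}" "$name"
    if [ "${DRY_RUN:-0}" = 1 ]; then
        printf '%s\n' "$*"   # show the command instead of running it
    else
        "$@"
    fi
}
```

Usage: DRY_RUN=1 boot_on_host test ruby.tuxlabs.com d1a89c10-0ae2-43f0-8cf2-f02c20e19618 prints the command; drop DRY_RUN=1 to actually boot.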

Once the VM is building, we can verify that it is on the right hypervisor:

```
[root@diamond tuxninja(keystone_admin)]# nova hypervisor-servers ruby.tuxlabs.com
+--------------------------------------+-------------------+---------------+---------------------+
| ID                                   | Name              | Hypervisor ID | Hypervisor Hostname |
+--------------------------------------+-------------------+---------------+---------------------+
| f7b9e8bb-df45-4b94-a896-5600f47c269b | instance-00000019 | 2             | ruby.tuxlabs.com    |
+--------------------------------------+-------------------+---------------+---------------------+
[root@diamond tuxninja(keystone_admin)]# nova hypervisor-servers diamond.tuxlabs.com
+--------------------------------------+-------------------+---------------+---------------------+
| ID                                   | Name              | Hypervisor ID | Hypervisor Hostname |
+--------------------------------------+-------------------+---------------+---------------------+
| a4c67465-d7ef-42b6-9c2a-439f3b13e841 | instance-00000017 | 1             | diamond.tuxlabs.com |
| 0c34028d-dfb6-4fdf-b9f7-daade66f2107 | instance-00000018 | 1             | diamond.tuxlabs.com |
+--------------------------------------+-------------------+---------------+---------------------+
[root@diamond tuxninja(keystone_admin)]#
```

You can see from the output above that I have two VMs on my existing controller, diamond.tuxlabs.com, and that the newly created instance is on ruby.tuxlabs.com as instructed. Awesome.
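With more hypervisors, a per-host count is handier than reading each table. This is a sketch, assuming the hypervisor-servers table layout shown above with the hostname in the fourth column; count_by_hypervisor is hypothetical. It accepts several tables concatenated on stdin and skips the header rows.

```shell
# Count instances per hypervisor from one or more `nova hypervisor-servers`
# tables on stdin; prints "hostname count" lines sorted by hostname.
# count_by_hypervisor is a hypothetical helper.
count_by_hypervisor() {
    awk -F'|' '
        NF > 4 {
            host = $5; gsub(/^ +| +$/, "", host)   # 4th column: Hypervisor Hostname
            if (host != "Hypervisor Hostname") count[host]++
        }
        END { for (h in count) print h, count[h] }' | sort
}
```

For the two tables above, { nova hypervisor-servers ruby.tuxlabs.com; nova hypervisor-servers diamond.tuxlabs.com; } | count_by_hypervisor prints diamond.tuxlabs.com 2 and ruby.tuxlabs.com 1.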

Now that you are sure you set up your compute node correctly and can boot a VM on a specific hypervisor from the command line, you might be wondering how this works in the GUI. The answer: a little differently 🙂

The OpenStack Nova Scheduler

The Nova Scheduler in OpenStack is responsible for determining which compute node a VM should be created on. If you are familiar with VMware, this is similar to DRS, except that it only happens at initial creation: there is no rebalancing as resources are consumed over time.

Using the OpenStack Dashboard GUI, I am unable to tell Nova to boot on a specific hypervisor; to do that I have to use the command line above. (If someone knows of a way to do this using the GUI, let me know. I have a feeling that if it has not been added already, the ability to send a hint to Nova from the GUI will be added in a later version.) In theory, you can trust the nova-scheduler service to automatically balance the usage of compute resources (CPU, memory, disk, etc.) based on its default configuration. However, if you want to ensure that certain VMs live on certain hypervisors, you will want to use the command line above. For more information on how the scheduler works, see: http://cloudarchitectmusings.com/2013/06/26/openstack-for-vmware-admins-nova-compute-with-vsphere-part-2/
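To make the idea concrete: Nova’s filter scheduler first filters out hosts that cannot satisfy a request, then weighs the remaining hosts and picks the best one. The toy sketch below is not Nova’s code. pick_host is hypothetical and uses only a single free-RAM filter and a most-free-RAM weight, which roughly mirrors the spreading behaviour of the default RAM weigher. Input is one "hostname free_ram_mb" pair per line.

```shell
# Toy filter-then-weigh host picker; NOT Nova's implementation.
# stdin: "hostname free_ram_mb" per line. $1: RAM the request needs (MB).
# Filter: drop hosts with too little free RAM. Weigh: prefer most free RAM.
pick_host() {
    awk -v need="$1" '
        ($2 + 0) >= (need + 0) && (best == "" || ($2 + 0) > max) {
            best = $1; max = $2 + 0
        }
        END { if (best == "") exit 1; print best }'
}
```

For example, m1.small requests 2048 MB of RAM, so with made-up free-RAM figures, printf 'diamond.tuxlabs.com 1024\nruby.tuxlabs.com 7000\n' | pick_host 2048 prints ruby.tuxlabs.com. If no host passes the filter, the function prints nothing and returns non-zero, loosely analogous to a "no valid host" scheduling failure.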

The End

That is all for now. Hopefully this tutorial helped you expand both your OpenStack compute resources and your knowledge of OpenStack. Until next time!